Introduction

Almost every winter, my brother, my dad, and I play a series of Half-Life games, which we record and upload to YouTube. We call the series the "Christmas Deathmatch".

I'm always up for a good game, but I'm also always up for a good media project. Christmas Deathmatch isn't popular by any means, but it is a good way for me to practice video editing and production. So I'm going to show some of the behind-the-scenes work that went into editing 2017's Christmas Deathmatch.

In past years, I relied heavily on Adobe After Effects to do my work for me. Over 2016 and 2017, I realised there was a much more efficient and automated way to do the editing I needed without professional video editing software. I only used Adobe After Effects for the parts of the video that I actually had to edit, and I managed to automate the rest. All encoding with x264 was automated with a few shell scripts and ffmpeg. It's going to sound complicated when you read this, but in the end it's just a matter of setting up files so that the scripts can encode everything automatically.

Software List

Here's the software used for 2017's Christmas Deathmatch:

- Adobe After Effects CC 2015.3

- Audacity

- ffmpeg

- Fraps

- GIMP

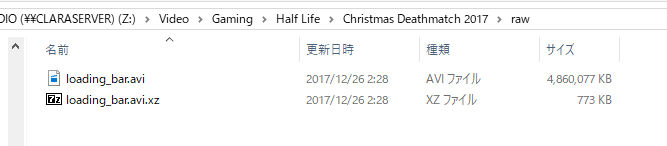

The Christmas Deathmatch videos are essentially 4 videos concatenated together:

- loading_bar.mkv - The loading bar... literally

- preview_glass.mkv - The map previews

- gameplay.mkv - The actual match gameplay

- ending.mkv - The ending with the links to the next/previous videos and copyright.

Step 1: Recording the Gameplay

What's a gaming video without gameplay? And more importantly (from a production perspective), how can we record it so that the video has the best framerate, compression, and colour matrices for viewing?

Fraps has always been reliable for recording gameplay. It's fast, but it chews up a shitload of HDD space. I'm sure we all knew this. The key, though, is that the footage is raw, uncompressed, 1080p 60 fps, and purely bgr24 encoded. This is very important, as it means we can do a lot of editing in post if we want to. More importantly, it also gives better results when we encode it with an encoder like libx264 or libx265.

The reason I mention this: in 2016, I recorded with Dxtory using libx264. Because of this, the files were already compressed prior to editing, so when I processed the final master, the footage was actually compressed twice. This resulted in poor quality. Since we are using true raw recording this time, we are assured of the maximum quality in the final master.

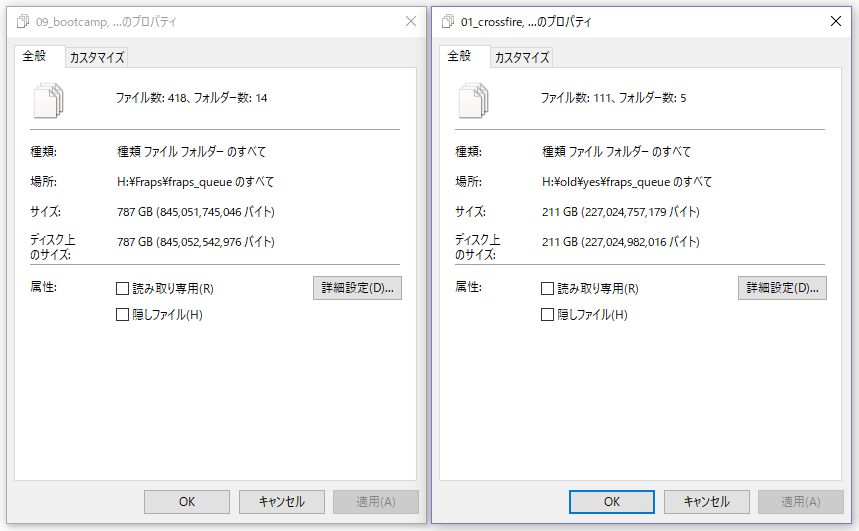

Of course, as mentioned earlier, Fraps takes up a lot of space: 787 GB total for 14 matches of 15-20 minutes each. That's a lot! But wait... we actually had to re-record a few matches, and those took up an additional 211 GB (998 GB total). So in total, almost 1 TB of raw Fraps footage was used for this project!

Step 2: Preparing the loading screen

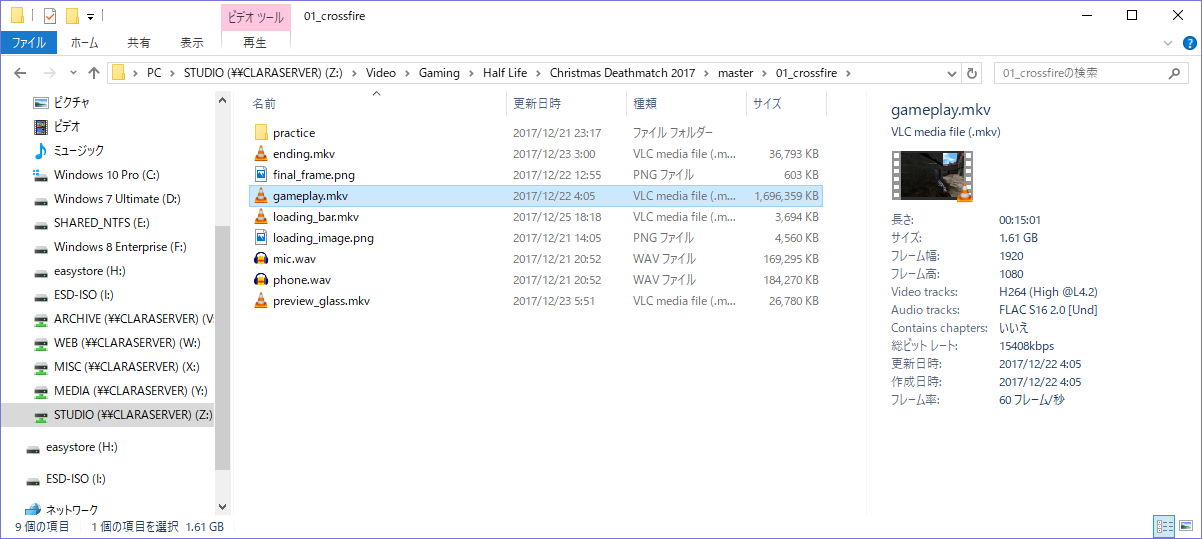

At the beginning of every video, a loading screen is shown for about 10 seconds. This is to give it the feel of Call of Duty: Modern Warfare 2. It looks like this:

This is where the tricks of ffmpeg come into play. Each loading screen is simply a PNG image. We could go into Adobe After Effects and manually render out 14 videos with the loading bar added to the bottom. Or we could take a more flexible approach and automate it via ffmpeg.

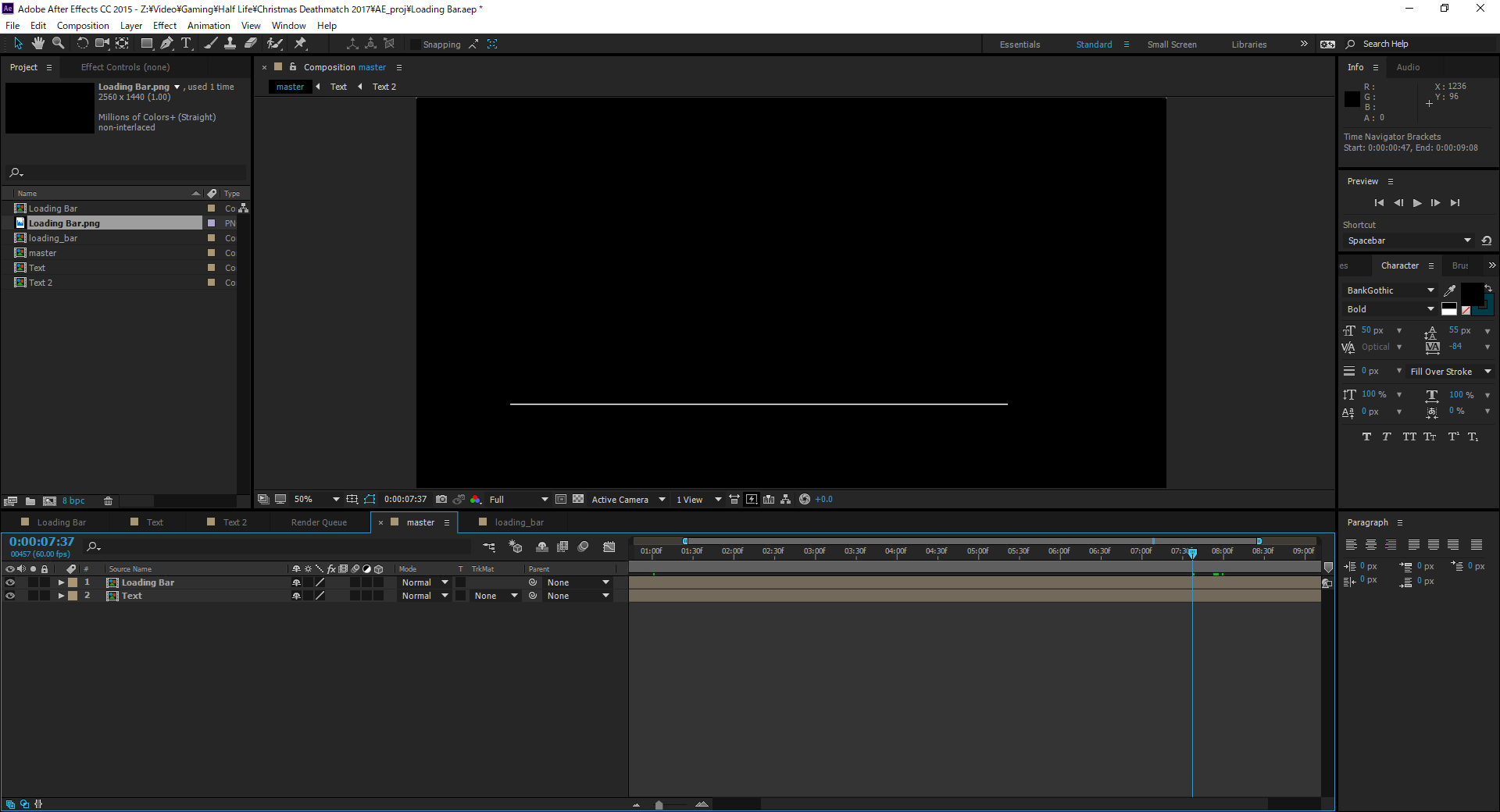

This method still requires After Effects, however. But instead of exporting a video per loading screen, let's export only one video: just the loading bar itself, with everything else transparent.

This render took seconds when exporting RAW with transparency. But the file was huge... around 4.63 GB. So I pulled out the Unix tool "xz-utils" and losslessly compressed it. The result? The file became a mere 773 KB. I am not joking. That tiny file contained a 4.63 GB raw AVI file. Of course, it compressed really well because most of the pixels are transparent, and also because it's a raw file. XZ, Gzip, and Bzip2 like raw files.
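The compress and restore steps can be sketched roughly like this, assuming `xz` from xz-utils is installed. The function names and the `DRY_RUN` switch are hypothetical, and the `-9` preset is an assumption, since the post does not say which compression level was used.

```shell
#!/usr/bin/env bash
# Hypothetical helpers; the compression level actually used is not stated in the post.

# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# Losslessly compress a raw file: -k keeps the original, -9 is the highest preset.
compress_raw() {
    run xz -k -9 "$1"
}

# Restore the raw file from its .xz archive, keeping the archive on disk.
decompress_raw() {
    run xz -d -k "$1"
}
```

Calling `compress_raw raw/loading_bar.avi` would leave `raw/loading_bar.avi.xz` next to the original, and `decompress_raw` reverses it whenever the raw file is needed again.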

Moving on, let's get to how I automated the loading bar footage. First off, I had to decide on the encoding standard I was going to compress the final video into. For the YouTube upload, I decided to stick with libx264 @ 18000k CBR and 48000 Hz stereo FLAC audio.

"But Clara! Why not just encode it in raw?"

The loading bar requires no editing. It's just generated and compressed. We can render and compress it in a single step via ffmpeg, as opposed to making a raw video and then compressing it later.

The Automation

Here's where my years of practicing ffmpeg pay off. I wrote a shell script (gen_loadingbar.sh), which you can view here: https://hastebin.com/obimiqitig.bash Here's what the script does:

- Decompresses loading_bar.avi (remember we compressed it earlier?)

- Changes into each directory holding the image files

- Checks whether "loading_bar.mkv" has already been rendered for that match (if so, we can skip it)

- If a "loading_bar.mkv" doesn't exist, render the video via ffmpeg.

- After it is done, delete "loading_bar.avi", as we can just keep the xz compressed one.
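The steps above can be sketched as a small loop. This is not the original gen_loadingbar.sh: the `matches/<name>/` layout, the function names, and the idea of passing the renderer in as a function are all assumptions made for illustration.

```shell
#!/usr/bin/env bash
# Sketch only: directory layout and function names are assumptions.

# Succeeds when a match directory still lacks its rendered loading bar.
needs_render() {
    [ ! -f "$1/loading_bar.mkv" ]
}

# Walk every match directory under $1 and call the renderer ($2) where needed.
render_all() {
    local root="$1" renderer="$2" dir
    for dir in "$root"/*/; do
        [ -d "$dir" ] || continue
        if needs_render "$dir"; then
            "$renderer" "${dir%/}"
        else
            echo "skip: ${dir%/}"
        fi
    done
}

# Full procedure: unpack the raw bar, render what is missing, remove the raw bar.
gen_loadingbars() {
    local project="$1" renderer="$2"
    xz -d -k "$project/raw/loading_bar.avi.xz"
    render_all "$project/matches" "$renderer"
    rm -f "$project/raw/loading_bar.avi"
}
```

Keeping the "already rendered?" check in its own function means re-running the script after adding one new match only renders that match.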

How it works

The rendering process is worth mentioning:

    ffmpeg \
      -hide_banner \
      -v quiet \
      -stats \
      -r 60 \
      -i "loading_image.png" \
      -i "../../raw/loading_bar.avi" \
      -f lavfi \
      -i anullsrc=channel_layout=stereo:sample_rate=48000 \
      -shortest \
      -filter_complex "[0:v]scale=1920:1080[bg];[bg][1:v]overlay,fps=60" \
      -vcodec libx264 \
      -strict -2 \
      -pix_fmt yuvj420p \
      -b:v 18000k \
      -maxrate 18000k \
      -bufsize 18000k \
      -acodec flac \
      "loading_bar.mkv"

The method used is simply overlaying. It uses "loading_image.png" as the background, and then overlays the raw video of the loading bar on top. Remember, that raw video has transparency. The script also creates a silent audio track (because concatenating without audio causes issues). Since I am not encoding the video in raw, whatever I use here must also be used for all other videos in the final video, because otherwise it will not concatenate.

Step 3: The Map Previews

I didn't get away with not using After Effects this time... The map previews are very simple though. I simply recorded previews of each map and overlaid custom images and text on them. The result had to be exported raw, and then processed via ffmpeg to guarantee that it was compressed with the exact same codec, at the exact same bitrate.
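To keep the settings identical across segments, the encoder options can live in one place. A minimal sketch, assuming the same video options as the loading bar command: `ENCODE_ARGS`, `encode_segment`, and the `FFMPEG` override are hypothetical names, and `-ar 48000 -ac 2` is an assumption based on the stated 48000 Hz stereo target.

```shell
#!/usr/bin/env bash
# Sketch: one shared set of encoder options for every segment.
# Video options are copied from the loading bar command; audio flags are assumed.

ENCODE_ARGS="-vcodec libx264 -pix_fmt yuvj420p -b:v 18000k -maxrate 18000k -bufsize 18000k -acodec flac -ar 48000 -ac 2"

# Re-encode a raw export ($1) into a segment ($2) with the shared settings.
# Set FFMPEG=echo to print the arguments instead of running ffmpeg.
encode_segment() {
    # Word splitting of $ENCODE_ARGS is intentional: it holds only simple flags.
    "${FFMPEG:-ffmpeg}" -hide_banner -i "$1" $ENCODE_ARGS "$2"
}
```

For example, `encode_segment preview_glass.avi preview_glass.mkv` would encode the raw After Effects export with the same bitrate and pixel format as the loading bar.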

Step 4: The Ending

Again, I couldn't get away with just ffmpeg here. BUT, the ending is a special case. The loading bar and the map previews were prepared before the gameplay was even recorded. However, the ending is special because it requires the final frame from the gameplay footage for that flash effect. Again, this had to be exported raw, and then compressed with ffmpeg.
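The post does not say how the final gameplay frame was grabbed. One possible way, sketched here as an assumption, is to let ffmpeg seek to the last second of the file and keep overwriting a single image until the stream ends. `last_frame` and the `FFMPEG` override are hypothetical names.

```shell
#!/usr/bin/env bash
# Sketch: save the last frame of a video ($1) as a still image ($2).
# -sseof -1 seeks to one second before the end of the input;
# -update 1 makes the image muxer overwrite the same file for each frame,
# so the file left on disk is the final frame.
# Set FFMPEG=echo to print the arguments instead of running ffmpeg.
last_frame() {
    "${FFMPEG:-ffmpeg}" -hide_banner -y -sseof -1 -i "$1" -update 1 "$2"
}
```

`last_frame gameplay.avi last_frame.png` would then produce a PNG that can be imported into After Effects for the flash effect.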

Putting it all together... The "Compiler" (Concatenator)

This is where all of the fun pays off... when it actually comes together and just works. So this is what each directory looks like:

Now, let's use a magical script that I wrote, called "compile.sh" (Yes, I know it isn't a real compiler). Here's the source code: https://hastebin.com/ipakiyazel.bash

compile.sh Example: When files are missing.

The script will check if the 4 required files are there for concatenating into the final YouTube master. Here's an example of what it looks like when it runs and a few files are missing...
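The check itself can be sketched in a few lines. This is an illustration rather than the real compile.sh; `REQUIRED_PARTS` and `missing_parts` are hypothetical names, while the four file names come from the list at the top of this post.

```shell
#!/usr/bin/env bash
# Sketch: report which of the four required segments are missing from a directory.

REQUIRED_PARTS="loading_bar.mkv preview_glass.mkv gameplay.mkv ending.mkv"

# Print the name of every required file that does not exist in directory $1.
missing_parts() {
    local part
    for part in $REQUIRED_PARTS; do
        [ -f "$1/$part" ] || echo "$part"
    done
}

# Example:
#   missing="$(missing_parts match01)"
#   [ -z "$missing" ] || echo "match01 is missing: $missing"
```

An empty result means the directory is ready to be concatenated.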

Script success

Of course, in the end, all videos were concatenated.

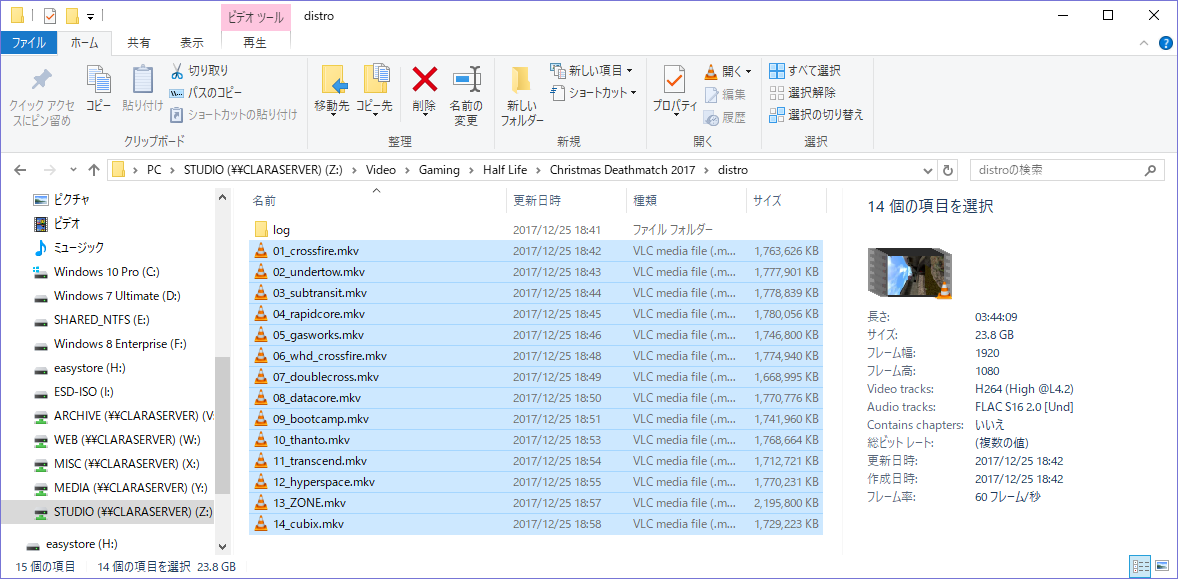

And because they were concatenated, the final YouTube masters are in the "distro" directory, production ready for upload to YouTube.
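A minimal sketch of the concatenation step, assuming ffmpeg's concat demuxer with stream copy is used (the post does not confirm which concatenation method compile.sh relies on). The function names and the `FFMPEG` override are hypothetical, and paths containing single quotes are not handled.

```shell
#!/usr/bin/env bash
# Sketch: join the four segments of one match without re-encoding.

# Write a concat-demuxer list for the four parts found in directory $1.
write_concat_list() {
    local part
    for part in loading_bar.mkv preview_glass.mkv gameplay.mkv ending.mkv; do
        printf "file '%s/%s'\n" "$1" "$part"
    done
}

# Concatenate the segments in directory $1 into the output file $2.
concat_match() {
    local dir="$1" out="$2" list
    list="$(mktemp)"
    # Absolute paths are used in the list, which is why -safe 0 is needed.
    write_concat_list "$(cd "$dir" && pwd)" > "$list"
    mkdir -p "$(dirname "$out")"
    "${FFMPEG:-ffmpeg}" -hide_banner -f concat -safe 0 -i "$list" -c copy "$out"
    rm -f "$list"
}
```

Something like `concat_match match01 distro/match01.mkv` would then write the finished master into the "distro" directory. Stream copy only works because every segment was encoded with the same settings.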

The goal, accomplished

While this all seems complicated, the scripts I wrote essentially allow me to set up the files and then have everything render out in a single command for future years of Christmas Deathmatch. I'm glad I was able to turn this project into a learning experience and a chance to practice my media production skills, as that is something I can definitely use to my advantage in the future.

You can watch the final encoded videos on YouTube here: